It turns out descriptions like ‘subtle changes’ or ‘more complex set-up, simpler equations’ are somewhat lacking when comparing ART1 and ART2 networks. The changes involved are significant (mostly within the F1/Input/Comparison layer), with some 15 equations including ODE’s.

I think this shows that my current ART1 network is more than likely significantly less complex than the true model – as there is only one major equation (the feed-forward weight scaling equation) along with the dot product.

As I believed the ‘true’ ART2 architecture was far too complex, I have been pursuing modifying my existing ART1 network to accept real values. Once completed I will detail the required ‘modifications’ (hacks) required, but so far the network will accept real inputs – there is no comparison at this point (0 vigilance) – all 5 patterns are classed as new exemplars.

Even this ‘simple’ step required significant modification of the ART1 implementation – also perhaps revealing some errors within its logic.

This test data has been designed to have two sets of similar data (the first two pairs) and a final pair with some more significant differences – to test the effectiveness of the vigilance test.

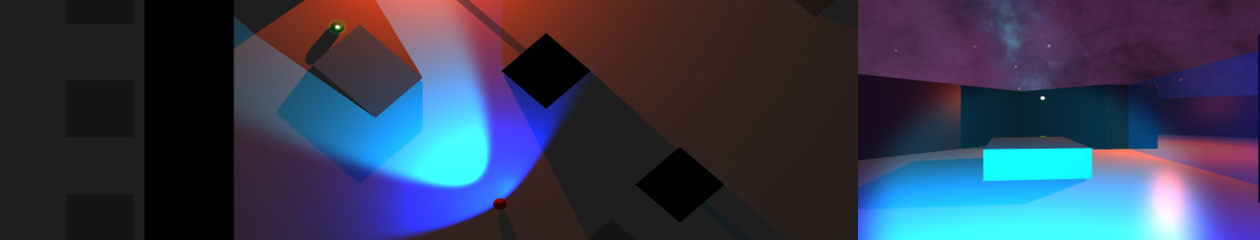

In the above image:

– white nodes represent 0’s – as the input vector has fixed length, any gaps must be filled

– blue nodes have negative values (both feedback and exemplar)

– red nodes have positive values

For non-zero nodes, the size of the sphere is the value of the feed-forward weights trained to that node – in this case they are the normalised values of the exemplar.