Source code – Unity, C#

The final part of our 4th Year of Computer Games Technology was the 2015 Abertay Showcase.

I was honoured that my project was chosen for the YoYo Games Prize for Applied Theory.

I was on hand over the weekend to talk visitors through my project, and it was a great experience.

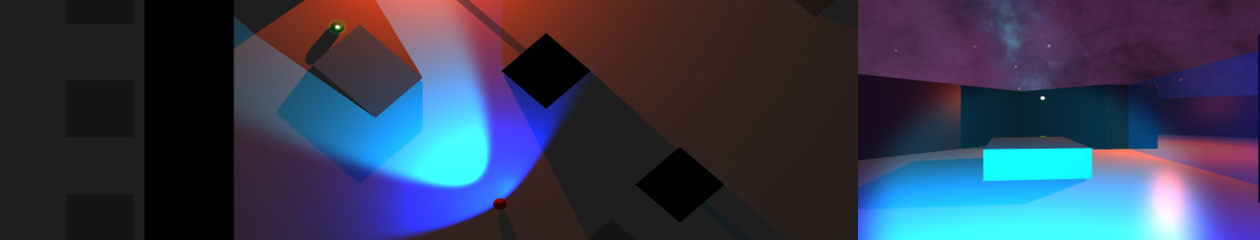

During the initial stages of this project I was considering which engine (if any) to use. As I felt that a 2D demonstration would be sufficient, the idea of writing the whole project in C++ with the aid of a graphics library was tempting. My decision to use Unity was based both on my previous experience with it and on my lack of experience with Unreal. While building the first prototype I was quickly forced into using a full 3D scene, both to take advantage of Unity’s inbuilt pathfinding and to allow true ray-casting for line-of-sight checks.

During development Unity 5 was released, bringing with it the previously Pro-only feature of real-time shadows. Initially this was only added for the aesthetics of representing the A.I.'s sight, and perhaps to move closer to the look of Metal Gear Solid's Soliton Radar.

During the showcase it became clear that visually representing sight through lights and the shadows they create was giving people a clear understanding of the game's primary mechanics. Though previous 2D concepts had seemed clear to me, they would have been far more difficult to explain. The additions of the 1st person view and mini-map were also positively received.

Programming a functioning ART network provided a significant challenge throughout the semester. Although I was unable to implement a functioning ART2 network, I was able to modify the existing ART1 implementation for this project.

A compromise that had to be made later on was the design of the ART network's input vector. Initially I had hoped to implement a system which could accept a variable-length input and re-order it to better suit any matching data. The ART1 implementation would not allow for this: doing so would have required removing parts of the system that define it as an ART network. I was therefore forced to design a fixed input structure to suit this project's implementation.
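To illustrate why a fixed input length matters, here is a minimal sketch of a simplified ART1 network with fast learning. It is not the project's code: the class name `Art1Sketch`, the `Present` method, and the parameter values are all assumptions made for illustration. Each category stores a binary prototype the same length as the input, and the match test compares the two position by position, which is what ties the network to a fixed input structure.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Simplified ART1 with fast learning over fixed-length binary inputs.
// Hypothetical illustration only; names and defaults are assumptions.
public class Art1Sketch
{
    private readonly int inputLength;   // every input must have this length
    private readonly double vigilance;  // rho: how strict a match must be (0..1)
    private readonly double beta;       // small constant in the choice function
    private readonly List<bool[]> prototypes = new List<bool[]>();

    public Art1Sketch(int inputLength, double vigilance, double beta = 0.5)
    {
        this.inputLength = inputLength;
        this.vigilance = vigilance;
        this.beta = beta;
    }

    public int CategoryCount { get { return prototypes.Count; } }

    // Presents one input and returns the index of the category it joined.
    public int Present(bool[] input)
    {
        if (input.Length != inputLength)
            throw new ArgumentException("Input length must match the network.");
        int inputNorm = input.Count(b => b);
        if (inputNorm == 0)
            throw new ArgumentException("Input needs at least one set bit.");

        // Rank existing categories by the choice function |I AND w| / (beta + |w|).
        List<int> ranked = Enumerable.Range(0, prototypes.Count)
            .OrderByDescending(j =>
                Overlap(input, prototypes[j]) / (beta + prototypes[j].Count(b => b)))
            .ToList();

        foreach (int j in ranked)
        {
            // Vigilance test: fraction of the input's set bits the prototype shares.
            double match = (double)Overlap(input, prototypes[j]) / inputNorm;
            if (match >= vigilance)
            {
                // Resonance: fast learning shrinks the prototype to I AND w.
                for (int i = 0; i < inputLength; i++)
                    prototypes[j][i] = prototypes[j][i] && input[i];
                return j;
            }
            // Otherwise this category is reset and the next candidate is tried.
        }

        // No category passed the vigilance test, so commit a new one.
        prototypes.Add((bool[])input.Clone());
        return prototypes.Count - 1;
    }

    private static int Overlap(bool[] a, bool[] b)
    {
        int count = 0;
        for (int i = 0; i < a.Length; i++)
            if (a[i] && b[i]) count++;
        return count;
    }
}
```

With a vigilance of 0.7, for example, presenting `{1,1,0,0}` twice lands in the same category, while `{1,1,1,0}` shares only two of its three set bits with that prototype and so starts a new category.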

The previously built DBSCAN algorithm was added to allow the A.I. to search the environment. The test scene contains a list of positions which the A.I. must search; once every location has been visited, the list is refreshed. Each position has a set radius, which is used to run DBSCAN over the remaining area. This search highlights any clusters as new navigation points to be visited before the A.I. can move on to the next area.
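The clustering step can be sketched roughly as below. This is a generic textbook DBSCAN over 2D points, not the project's implementation: the `Point2` struct, the `DbscanSketch` class, and the choice of what the points represent (for instance, sampled positions inside a search radius) are assumptions for illustration.

```csharp
using System;
using System.Collections.Generic;

// Plain 2D point so the sketch does not depend on Unity's Vector2.
public struct Point2
{
    public float X, Y;
    public Point2(float x, float y) { X = x; Y = y; }
}

// Generic DBSCAN sketch. Hypothetical illustration only.
public static class DbscanSketch
{
    public const int Noise = -1;
    private const int Unvisited = -2;

    // Returns one label per point: 0..k-1 for clusters, Noise for outliers.
    public static int[] Cluster(IList<Point2> points, float eps, int minPts)
    {
        var labels = new int[points.Count];
        for (int i = 0; i < labels.Length; i++) labels[i] = Unvisited;
        int cluster = 0;

        for (int i = 0; i < points.Count; i++)
        {
            if (labels[i] != Unvisited) continue;

            List<int> neighbours = RegionQuery(points, i, eps);
            if (neighbours.Count < minPts)
            {
                labels[i] = Noise; // may later be claimed as a border point
                continue;
            }

            // Point i is a core point: grow a new cluster outwards from it.
            labels[i] = cluster;
            var queue = new Queue<int>(neighbours);
            while (queue.Count > 0)
            {
                int q = queue.Dequeue();
                if (labels[q] == Noise) labels[q] = cluster; // border point
                if (labels[q] != Unvisited) continue;

                labels[q] = cluster;
                List<int> qNeighbours = RegionQuery(points, q, eps);
                if (qNeighbours.Count >= minPts) // q is also a core point
                    foreach (int n in qNeighbours) queue.Enqueue(n);
            }
            cluster++;
        }
        return labels;
    }

    // Indices of all points within eps of points[index], including itself.
    private static List<int> RegionQuery(IList<Point2> points, int index, float eps)
    {
        var result = new List<int>();
        float epsSq = eps * eps;
        for (int j = 0; j < points.Count; j++)
        {
            float dx = points[j].X - points[index].X;
            float dy = points[j].Y - points[index].Y;
            if (dx * dx + dy * dy <= epsSq) result.Add(j);
        }
        return result;
    }
}
```

One way to turn the result into navigation points would be to take the centroid of each labelled cluster and ignore anything marked as noise, although how the project derived its navigation points from the clusters is not stated here.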

As the final build requires an Xbox 360 controller, this video demonstrates the main mechanics of the project.