During the final integration process, I needed to finalise the details of the test environment. Its structure is mostly unchanged since the conversion to Unity 5; what remained was deciding exactly what the A.I. would be capable of ‘seeing and hearing’.

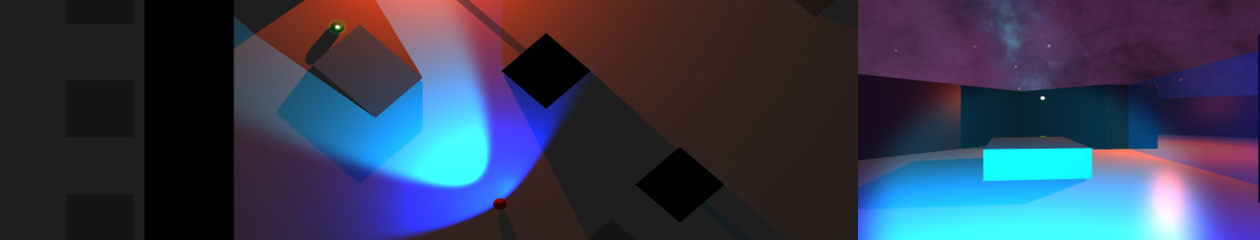

Combining the previously pitched ‘static’ sound emitters, I decided to place four static ‘decoys’. The player can only activate one at any time, and only when within a certain proximity – the button to activate is shown over the decoy if it can be turned on. An active decoy represents both an audio and a visual contact to the A.I.: it continuously broadcasts an audio contact, and registers as a visual contact when within the A.I.’s line-of-sight (now represented by blue arcs). The decoy stays active for a set amount of time, and is visually represented as a pair of rotating orange spotlights.
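The decoy rules above can be sketched roughly as follows. This is a minimal illustration in Python rather than the project's Unity code; the names `Decoy`, `can_activate`, `tick` and `contacts`, and the values of `ACTIVATION_RANGE` and `ACTIVE_DURATION`, are all assumptions made for the example.

```python
import math
from dataclasses import dataclass

ACTIVATION_RANGE = 3.0   # assumed value: how close the player must be
ACTIVE_DURATION = 10.0   # assumed value: seconds a decoy stays on


@dataclass
class Decoy:
    x: float
    y: float
    time_left: float = 0.0  # seconds of activity remaining

    @property
    def active(self) -> bool:
        return self.time_left > 0.0


def can_activate(decoy, decoys, player_x, player_y):
    """The activate button is only shown when this returns True."""
    if any(d.active for d in decoys):
        return False  # only one decoy may be active at any time
    distance = math.hypot(decoy.x - player_x, decoy.y - player_y)
    return distance <= ACTIVATION_RANGE


def activate(decoy, decoys, player_x, player_y):
    """Turn the decoy on if the rules allow it; report whether it worked."""
    if not can_activate(decoy, decoys, player_x, player_y):
        return False
    decoy.time_left = ACTIVE_DURATION
    return True


def tick(decoys, dt):
    """Advance the timers by dt seconds; decoys switch off at zero."""
    for d in decoys:
        d.time_left = max(0.0, d.time_left - dt)


def contacts(decoy, in_line_of_sight):
    """Contacts an active decoy presents to the A.I."""
    if not decoy.active:
        return []
    # Audio is broadcast continuously; visual only within line-of-sight.
    return ["audio", "visual"] if in_line_of_sight else ["audio"]
```

The line-of-sight test itself is passed in as a boolean here, since how the A.I. computes it is outside the scope of this sketch.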

From the beginning, the idea of a player-launched contact had been planned. This has been implemented in the form of a green flare, launched directly from the player in the chosen direction. The range is fairly short; the player can throw over the ‘low’ (lighter grey) obstacles, but not through anything higher. Representing a player-like decoy, the flare also functions as both a constant audio and visual contact, lasting a set amount of time, with only one allowed active at a time. Unlike the static decoys, however, it is thrown and rolls, so it is capable of movement like the player.
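As a rough sketch of the throw rule, the flare's travel along its throw direction could be limited like this. The function name `flare_travel_distance`, the `(distance, is_low)` obstacle representation and the `THROW_RANGE` value are assumptions for illustration only, and rolling after landing is not modelled.

```python
THROW_RANGE = 5.0  # assumed value: the "fairly short" maximum throw distance


def flare_travel_distance(obstacles_on_path, max_range=THROW_RANGE):
    """Return how far a flare travels along its throw direction.

    obstacles_on_path: iterable of (distance, is_low) pairs, one per
    obstacle crossed by the throw line (a hypothetical representation).
    Low obstacles are thrown over; anything higher stops the flare.
    """
    blocking = [
        distance
        for distance, is_low in obstacles_on_path
        if not is_low and distance <= max_range
    ]
    # Stop at the nearest tall obstacle, otherwise fly the full range.
    return min(blocking, default=max_range)
```

For example, a throw that crosses a low obstacle at 2 units and a tall one at 4 units would stop at 4, while a throw that crosses only low obstacles reaches the full range.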

The final added piece of functionality was to allow the player to crouch. This lets the player both break LOS using low obstacles and move silently (but more slowly than running). The idea is to allow some room for a player to duck behind an obstacle and break contact with the A.I. At this time I’m undecided whether I should allow the player to throw flares while concealed behind a low obstacle. That said, gameplay balance was never a focus for this project, just the possibilities of messing with the A.I.
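The effect of crouching on what the A.I. can ‘see and hear’ can be summarised in a small sketch. The `Cover` enum, the function names and the speed values are hypothetical, and the sketch assumes that higher obstacles always block line-of-sight, which is not stated explicitly above.

```python
from enum import Enum

RUN_SPEED = 4.0     # assumed value
CROUCH_SPEED = 2.0  # assumed value: crouching is slower than running


class Cover(Enum):
    NONE = 0  # nothing between the A.I. and the player
    LOW = 1   # a low (lighter grey) obstacle in between
    HIGH = 2  # a higher obstacle in between


def is_visible(crouching, cover):
    """Whether the player is a visual contact, given the cover in between."""
    if cover is Cover.HIGH:
        return False          # assumption: tall obstacles always block LOS
    if cover is Cover.LOW:
        return not crouching  # low obstacles only hide a crouched player
    return True


def makes_noise(moving, crouching):
    """Whether the player is an audio contact; crouched movement is silent."""
    return moving and not crouching


def move_speed(crouching):
    return CROUCH_SPEED if crouching else RUN_SPEED
```

Under these rules a crouched player moving behind a low obstacle presents neither a visual nor an audio contact, which is the ‘break contact’ case described above.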