For our Procedural Methods module, I decided to explore terrain height map generation – specifically using the Diamond-Square algorithm.

Source code – C++.

As this was based on last semester's Shader Programming submission, the Rastertek code is again not included.

Rather than starting from code found online, I decided to approach the implementation by researching the algorithm. I certainly made a few mistakes along the way, and it's certainly not the most correct or efficient method (if there is such a thing), but this proved to be an interesting piece of coursework.

Sadly I believe my own diagram is slightly wrong…

The labels 2 and 4 in the 2nd iteration of the diamond stage are the wrong way round.
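For reference, here is a minimal sketch of the algorithm as it is usually described. It is not the code from my submission: the function name `diamondSquare`, its parameters and the halving `roughness` factor are all assumptions made for illustration. Naming of the two stages also varies between sources; here the "diamond" stage fills the centre of each square and the "square" stage fills the edge midpoints.

```cpp
#include <cstddef>
#include <random>
#include <vector>

// Hypothetical helper (not from the submission): builds a square height map
// with (2^n + 1) points per side, stored row by row.
std::vector<float> diamondSquare(int n, float roughness, unsigned seed)
{
    const int size = (1 << n) + 1;
    std::vector<float> h(static_cast<std::size_t>(size) * size, 0.0f);
    std::mt19937 rng(seed);

    auto at = [&](int x, int y) -> float& {
        return h[static_cast<std::size_t>(y) * size + x];
    };
    auto jitter = [&](float range) {
        return std::uniform_real_distribution<float>(-range, range)(rng);
    };

    // Seed the four corners with random heights.
    float range = 1.0f;
    at(0, 0) = jitter(range);
    at(size - 1, 0) = jitter(range);
    at(0, size - 1) = jitter(range);
    at(size - 1, size - 1) = jitter(range);

    for (int step = size - 1; step > 1; step /= 2) {
        const int half = step / 2;

        // Diamond stage: centre of each square = mean of its 4 corners + offset.
        for (int y = half; y < size; y += step) {
            for (int x = half; x < size; x += step) {
                const float avg = (at(x - half, y - half) + at(x + half, y - half) +
                                   at(x - half, y + half) + at(x + half, y + half)) * 0.25f;
                at(x, y) = avg + jitter(range);
            }
        }

        // Square stage: each edge midpoint = mean of its in-bounds neighbours + offset.
        for (int y = 0; y < size; y += half) {
            for (int x = ((y / half) % 2 == 0) ? half : 0; x < size; x += step) {
                float sum = 0.0f;
                int count = 0;
                if (x - half >= 0)   { sum += at(x - half, y); ++count; }
                if (x + half < size) { sum += at(x + half, y); ++count; }
                if (y - half >= 0)   { sum += at(x, y - half); ++count; }
                if (y + half < size) { sum += at(x, y + half); ++count; }
                at(x, y) = sum / static_cast<float>(count) + jitter(range);
            }
        }

        range *= roughness; // shrink the random offset at each finer level
    }
    return h;
}
```

Calling `diamondSquare(3, 0.5f, 42u)` would give a 9 x 9 grid; a lower `roughness` gives smoother terrain because the random offsets shrink faster.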

One of the techniques introduced in the previous semester's Shader Programming module was post-processing. I'd guess more than a few did not include any in that submission, as it was a requirement for this one.

I set my sights on implementing a depth-based blur effect, and I believe I got pretty close to getting the depth information stored in a render-to-texture.

In the end this Photoshop’d image was a reminder of the failed goal.

Instead I opted to modify the existing blur process – by adding a radial component. This was achieved rather sneakily by abusing the existing texture coordinates.

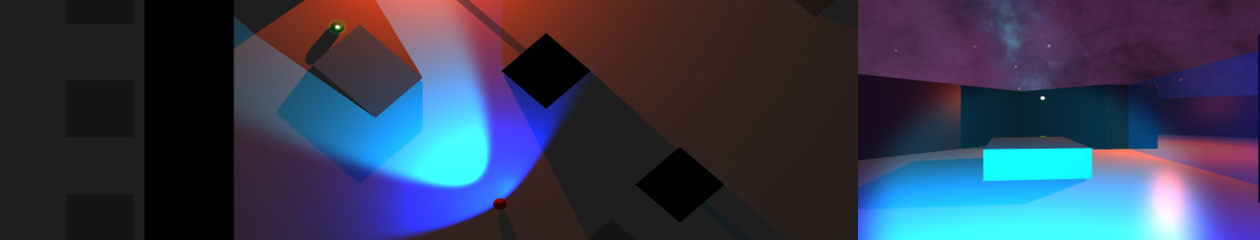

The blur effect off and on.
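The shader itself isn't shown here, so the following is only a sketch of one way a radial component can be derived from texture coordinates, written as plain C++ rather than shader code. It assumes coordinates in the range 0 to 1 with the screen centre at (0.5, 0.5); the names `radialBlurWeight` and `mixChannel` are hypothetical and not from the submission.

```cpp
#include <algorithm>
#include <cmath>

// Hypothetical helper: blur strength for a texture coordinate (u, v),
// assuming both lie in [0, 1]. Returns 0 at the screen centre and 1 at the corners.
float radialBlurWeight(float u, float v)
{
    const float dx = u - 0.5f;
    const float dy = v - 0.5f;
    const float maxDist = std::sqrt(0.5f); // distance from centre to a corner
    const float w = std::sqrt(dx * dx + dy * dy) / maxDist;
    return std::min(1.0f, std::max(0.0f, w));
}

// Hypothetical helper: blends one colour channel of the sharp and blurred
// images, so the centre stays sharp and the edges take the blurred value.
float mixChannel(float sharp, float blurred, float weight)
{
    return sharp + (blurred - sharp) * weight;
}
```

With this weighting a pixel at the centre keeps its sharp colour, while one in a corner takes the fully blurred colour.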

Even though I opted to skip the down- and up-scaling stages of the blur method (and with them rendering the whole scene two more times), the impact on performance was pretty severe.