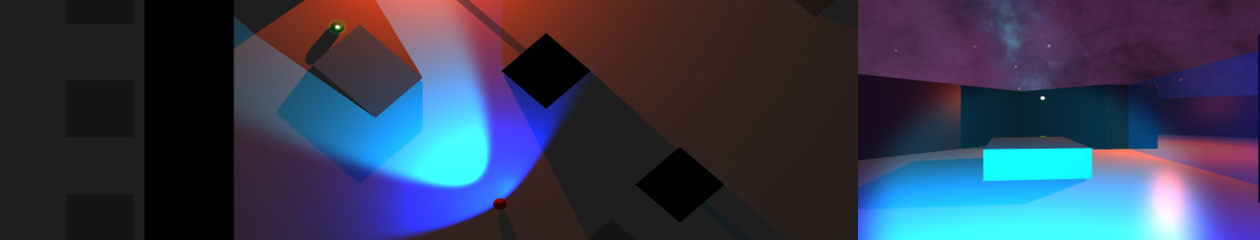

The tileset shown previously proved to have a few flaws and has been reworked slightly. Beyond that, initial functionality has now been added towards implementing the required search algorithm.

Red gizmos mark the search nodes within a radius that have been flagged to be searched; green gizmos mark the nodes that can currently be ‘seen’ by the object. For now this only covers the primary vision arc and does not factor in obstacles (although that functionality has been implemented elsewhere).

Using the arc meshes for this detection proved troublesome, if not impossible, as the project is actually in 3D rather than 2D. The work-around was:

// Get the normalised forward vector of the ‘entity’ object

Vector3 sight = forward.normalized;

// Get the normalised direction vector from the entity to this search node

Vector3 distanceVector = mSearchNodes[ i ].transform.position - center;

Vector3 direction = distanceVector.normalized;

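// Dot product of two unit vectors is the cosine of the angle between them:
// 1 (same direction), 0 (90 degrees), -1 (opposite direction).
// Clamped so rounding error cannot push it outside the valid Acos range.

float cosine = Mathf.Clamp( Vector3.Dot( sight, direction ), -1f, 1f );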

// Use arc cos and convert from radians to degrees

float degrees = Mathf.Acos( cosine ) * Mathf.Rad2Deg;

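The intended arcs are about 40 degrees (primary) and 140 degrees (peripheral). Because the computed angle is measured from the forward vector, a node falls inside an arc when the returned value is less than half of that arc's width (under 20 or 70 degrees respectively).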
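As a rough illustration of the half-angle test, the sketch below classifies a node as primary, peripheral or unseen. It is not the project's actual code: it uses `System.Numerics` instead of Unity's types so it can be compiled outside the engine, and the names `Visibility`, `VisionArc`, `AngleTo` and `Classify` are made up for the example. The arc widths are the approximate figures quoted above.

```csharp
using System;
using System.Numerics;

public enum Visibility { None, Peripheral, Primary }

public static class VisionArc
{
    // Assumed full arc widths in degrees (approximate values from the post).
    public const float PrimaryArc = 40f;
    public const float PeripheralArc = 140f;

    // Angle in degrees between the entity's forward vector and the direction to the target.
    public static float AngleTo(Vector3 center, Vector3 forward, Vector3 target)
    {
        Vector3 sight = Vector3.Normalize(forward);
        Vector3 direction = Vector3.Normalize(target - center);

        // Clamp guards against rounding pushing the dot product just outside [-1, 1].
        float cosine = Math.Clamp(Vector3.Dot(sight, direction), -1f, 1f);
        return MathF.Acos(cosine) * (180f / MathF.PI);
    }

    // The angle is measured from the centre line, so compare against half of each arc.
    public static Visibility Classify(float degrees)
    {
        if (degrees < PrimaryArc / 2f) return Visibility.Primary;
        if (degrees < PeripheralArc / 2f) return Visibility.Peripheral;
        return Visibility.None; // also reached when the angle is NaN (target at the entity's position)
    }
}
```

With the entity at the origin facing along +Z, a node straight ahead would classify as `Primary`, one 45 degrees off to the side as `Peripheral`, and one directly to the side or behind as `None`.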

An early version of the area system has also been implemented: it checks whether a search area is within the radius before running the calculation above for each search node inside it. This currently relies on collider checks, which also return obstacles and walls, but it does cut down on unnecessary per-node checks.
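The idea of rejecting whole areas before touching their nodes can be sketched without colliders. The version below is an assumption for illustration only, not the collider-based approach described above: each area is given a hypothetical bounding sphere (`Center`, `BoundingRadius`), and `SearchArea`, `AreaFilter` and `CandidateNodes` are invented names. It uses `System.Numerics` so it can run outside Unity.

```csharp
using System;
using System.Collections.Generic;
using System.Numerics;

public sealed class SearchArea
{
    public Vector3 Center;
    public float BoundingRadius;                       // sphere assumed to enclose every node in the area
    public List<Vector3> Nodes = new List<Vector3>();  // positions of the search nodes in this area
}

public static class AreaFilter
{
    // Returns only the nodes of areas whose bounding sphere overlaps the search radius.
    public static List<Vector3> CandidateNodes(
        Vector3 center, float searchRadius, IEnumerable<SearchArea> areas)
    {
        var result = new List<Vector3>();
        foreach (SearchArea area in areas)
        {
            float reach = searchRadius + area.BoundingRadius;

            // Compare squared distances to avoid a square root per area.
            if (Vector3.DistanceSquared(center, area.Center) > reach * reach)
                continue; // whole area is out of range, skip all of its nodes

            result.AddRange(area.Nodes);
        }
        return result;
    }
}
```

Only the nodes returned here would then need the per-node angle calculation, so areas that are far away cost a single distance comparison each.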